LLMs are fundamentally changing the way code is written. This is a freight train that you cannot stop. To borrow someone else’s line: if you’re not part of the steamroller, you’re part of the road.

I’ve been working in software for close to 30 years. In that time, I’ve written code in Fortran, C, C++, Assembler, MATLAB, VBScript, Visual Basic, Go, bash, PowerShell, batch files, C#, F#, Java, PHP, Perl, Python, JavaScript, TypeScript, Objective-C, SQL, Ruby and probably a few more I’ve forgotten.

I’ve written a lot of code, and I’ve read a lot more.

I’ve never seen a change on the scale of what we’re seeing with AI.

Where we’ve been

For my entire career, the process of reading and writing code hasn’t significantly changed: a developer (or engineer) uses a text editor to read and write code.

Sure, there have been advances. IDEs were invented and have become progressively more capable, making it far easier to navigate and modify codebases. But at bottom, it’s still someone looking at a bunch of code in a text editor window.

The editors have evolved (even Emacs and vi/vim), but the process has stayed the same.

Not anymore.

What LLMs can do

LLMs are changing all this. Developers are still reading code (and likely a lot more code), but they’re writing less because LLMs are generating the code.

LLMs are genuinely very good at generating code. They get the syntax right, they structure the code reasonably well, and they can produce good software. Really.

What they’re not as good at is judging what to build and whether it is the right thing to build. Humans are far better at this.

Good developers (or engineers) are needed to point the LLMs in the right direction and to review what they’ve generated.

LLMs are also quite good at reading code. They’re a great assistant for exploring an unfamiliar codebase.

What this looks like as a work pattern

Obviously this is a rapidly evolving area, but at the moment, current LLMs are good at well-defined, repetitive tasks. Working with them today feels like managing a team of tireless, enthusiastic junior developers. They move quickly, but they need extremely clear instructions; without guidance, they often head off in the wrong direction.

In practice, the workflow looks something like this:

- Break work into small, well-defined tasks.

- Send an agent (or coding assistant) to implement the task.

- Review the output carefully.

- Fix, refine, or sometimes discard the result entirely.

The key lesson is that smaller tasks produce better results.

Some real examples

I’m going to post a more extensive set of recommendations, but for now, here are some real examples of how I’ve found AI coding assistants helpful over the past few months (working primarily with a large, legacy codebase):

- refactoring code – it does a good job of completing clearly defined, repeatable tasks in the codebase

- creating unit tests – much of the work of writing unit tests is setting up mocks, and Claude does this well.

- managing and creating functional tests – it can generate BDD descriptions from existing tests, convert tests from legacy frameworks to new ones, and refactor tests to extract common code.

- performing code reviews – you can easily get the AI to look for specific issues beyond what existing tools catch. For example, you can check that the code follows our front-end styling standards.

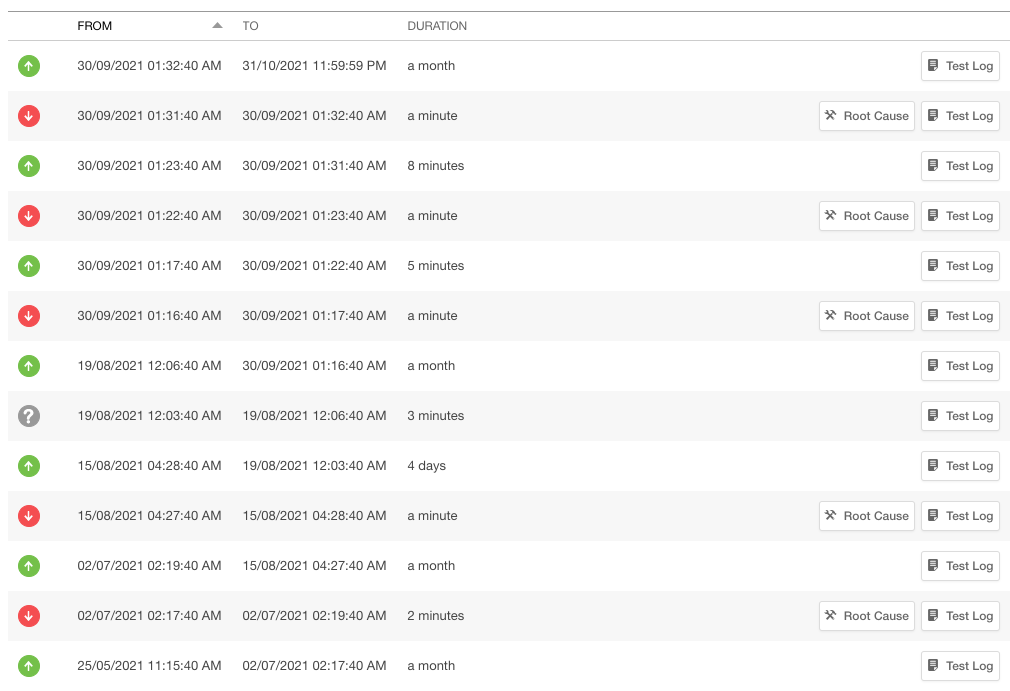

- searching for dead code – taking the production website usage logs, then getting Claude to identify code that is never used in production.

- searching for new errors in logs – building a Python script that queries the production error logs to see whether any new errors have appeared.
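To illustrate why mock setup dominates unit-test work, here is a minimal sketch using Python’s standard `unittest.mock`. The `OrderService` class, its `gateway` and `mailer` collaborators, and the test names are all hypothetical, invented for illustration; this is not code from the codebase described above.

```python
from unittest.mock import Mock


# Hypothetical class under test: in production, both collaborators
# would talk to real external services.
class OrderService:
    def __init__(self, gateway, mailer):
        self.gateway = gateway
        self.mailer = mailer

    def checkout(self, order_id: str, amount: int) -> bool:
        result = self.gateway.charge(order_id, amount)
        if result["status"] != "ok":
            return False
        self.mailer.send_receipt(order_id)
        return True


def test_checkout_sends_receipt_on_success():
    # Arrange: most of the test is configuring the mocks.
    gateway = Mock()
    gateway.charge.return_value = {"status": "ok"}
    mailer = Mock()
    service = OrderService(gateway, mailer)

    # Act: the behaviour under test is a single call.
    assert service.checkout("order-1", 500) is True

    # Assert: verify the collaborators were called as expected.
    gateway.charge.assert_called_once_with("order-1", 500)
    mailer.send_receipt.assert_called_once_with("order-1")


def test_checkout_skips_receipt_on_declined_payment():
    gateway = Mock()
    gateway.charge.return_value = {"status": "declined"}
    mailer = Mock()
    service = OrderService(gateway, mailer)

    assert service.checkout("order-2", 500) is False
    mailer.send_receipt.assert_not_called()
```

Nearly all of each test is arranging mocks and checking how they were called. That boilerplate is what an assistant can produce quickly, but a human still needs to confirm the assertions encode the right expectations. Saved in a `test_*.py` file, these functions are collected by pytest.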
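As a rough illustration of the dead-code search, the sketch below compares a list of route templates against access-log lines and reports routes that never appear. It assumes a Common Log Format-style quoted request field (`"GET /path HTTP/1.1"`) and `{param}` placeholders in route templates; the function names and sample data are hypothetical, and this is a sketch under those assumptions rather than the exact process used above.

```python
import re
from typing import Iterable

# Assumed log format: the request appears as a quoted field such as
# "GET /orders/42?expand=items HTTP/1.1" (Common Log Format style).
REQUEST = re.compile(r'"(?P<method>[A-Z]+) (?P<path>[^ ?"]+)[^"]*"')

# Assumed route syntax (hypothetical): "{name}" marks one path segment.
PLACEHOLDER = re.compile(r"\{[^/}]+\}")


def route_pattern(template: str) -> "re.Pattern[str]":
    """Turn a template such as '/orders/{id}' into a regex matching '/orders/42'."""
    literal_parts = PLACEHOLDER.split(template)
    body = "[^/]+".join(re.escape(part) for part in literal_parts)
    return re.compile("^" + body + "$")


def unused_routes(routes: Iterable[str], log_lines: Iterable[str]) -> list[str]:
    """Return the route templates that no request in the log matched."""
    patterns = {route: route_pattern(route) for route in routes}
    hit: set[str] = set()
    for line in log_lines:
        match = REQUEST.search(line)
        if match is None:
            continue  # skip malformed or non-request lines
        path = match.group("path")  # query string already excluded
        for route, pattern in patterns.items():
            if route not in hit and pattern.match(path):
                hit.add(route)
    return sorted(set(patterns) - hit)
```

A route with no hits in the log window is only a candidate: scheduled jobs, rarely used admin pages and non-HTTP entry points never show up in web logs, so each result still needs a human review before anything is deleted.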
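Here is a minimal sketch of the kind of new-errors check described in the last example, assuming log lines of the form `<timestamp> <LEVEL> <message>`. It normalises the volatile parts of each message (UUIDs, hex values, numbers) into a signature, then reports signatures in a recent window that never appear in a baseline window. The log format, the normalisation rules and the function names are all assumptions for illustration, and fetching the logs from a production log store is left out.

```python
import re
from collections import Counter
from typing import Iterable

# Assumed (hypothetical) log format: "<timestamp> <LEVEL> <message>".
LOG_LINE = re.compile(r"^\S+\s+(?P<level>[A-Z]+)\s+(?P<message>.*)$")

# Volatile fragments replaced so repeats of one error share a signature.
# UUIDs and hex values are handled before plain numbers.
_UUID = re.compile(
    r"\b[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}\b"
)
_HEX = re.compile(r"\b0x[0-9a-fA-F]+\b")
_NUM = re.compile(r"\d+")


def signature(message: str) -> str:
    """Collapse an error message into a stable signature."""
    message = _UUID.sub("<uuid>", message)
    message = _HEX.sub("<hex>", message)
    message = _NUM.sub("<n>", message)
    return message.strip()


def error_signatures(lines: Iterable[str]) -> Counter:
    """Count the signatures of ERROR-level lines, ignoring other levels."""
    counts: Counter = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and match.group("level") == "ERROR":
            counts[signature(match.group("message"))] += 1
    return counts


def new_errors(baseline: Iterable[str], recent: Iterable[str]) -> Counter:
    """Return signatures (with counts) seen in `recent` but not in `baseline`."""
    known = set(error_signatures(baseline))
    return Counter(
        {sig: n for sig, n in error_signatures(recent).items() if sig not in known}
    )
```

In use, `baseline` might be the previous week’s error lines and `recent` the last day’s; anything returned is an error signature the system has not produced in the baseline window. The normalisation rules are the part most likely to need tuning for a real codebase.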

Summary

If you are building software and you’re not using AI tools, you need to start now.